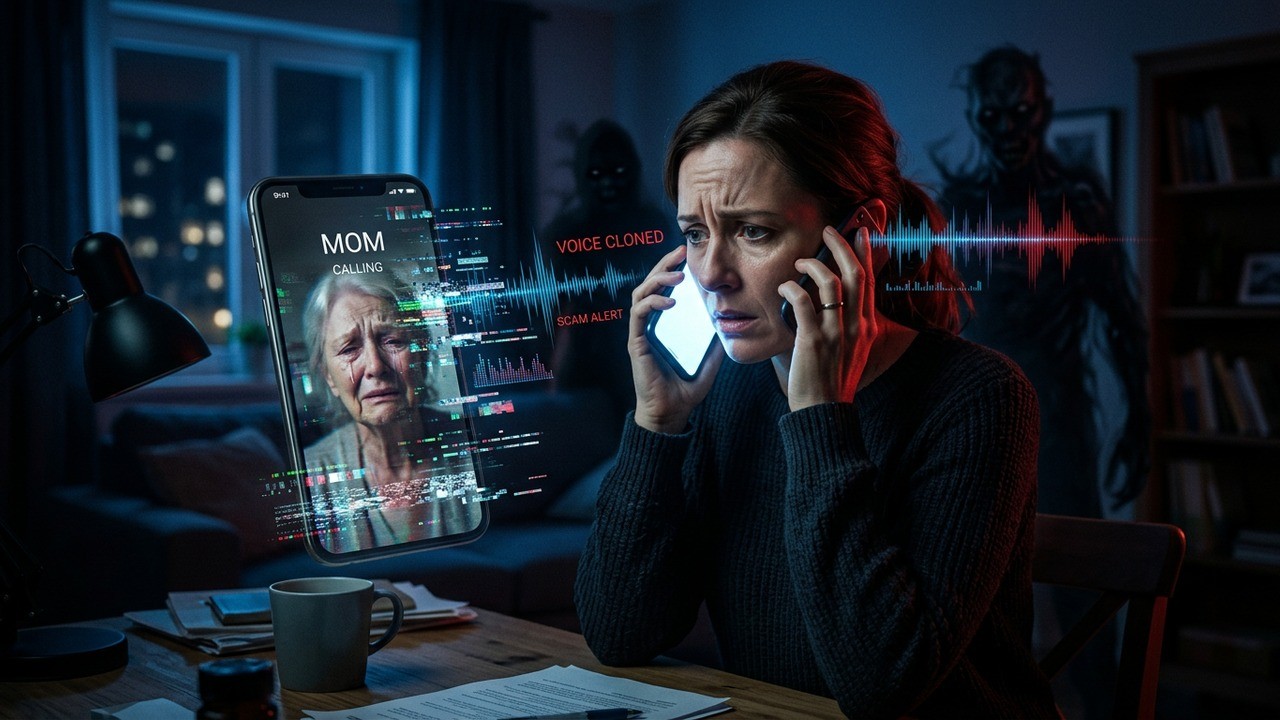

Imagine picking up the phone and hearing your child’s voice breaking with fear, begging for help. Your heart races, panic sets in, and before you can think clearly, a stranger takes over the line making threats. This isn’t a nightmare scenario from a movie—it’s happening right now to ordinary people because of advancing AI technology.

The way scammers operate has changed dramatically. What used to be obvious robocalls or clumsy, poorly scripted attempts have become sophisticated operations that use voice cloning to sound exactly like your loved ones. I’ve followed these stories closely, and the emotional toll they take goes far beyond financial loss. The fear and betrayal of trust linger long after the call ends.

The Rising Threat of AI-Powered Impersonation Scams

These scams prey on one of our most basic instincts: protecting our family. Callers use spoofed numbers that appear to come from your daughter’s phone, complete with her photo if your device shows contact pictures for incoming calls. Then comes the voice. It sounds exactly like her, complete with the emotional tremble or scared cry you would recognize anywhere.

One woman in Montana shared how she answered what looked like a call from her adult daughter. The voice on the other end was crying, saying “mom” in that specific way only her child does. Even though she had read about similar scams, the realism made her doubt everything. Moments later, a man joined the call, shifting from calm to aggressive, demanding money and warning against contacting police.

It was the most afraid I’ve ever been in my life.

– A mother who experienced an AI voice scam

Stories like this are becoming more common. Scammers no longer need long recordings. Sometimes just a few seconds of audio from social media videos or voicemails is enough to train AI models that can generate real-time conversation. They combine this with personal details easily found online—names, locations, workplaces—to create incredibly convincing scenarios.

How Voice Cloning Technology Makes Scams More Dangerous

The technology behind these calls has improved rapidly. Tools now require minimal input to replicate not just the sound of a voice but also emotional tones, speech patterns, and even background noises that make it feel authentic. This sophistication catches people off guard because our brains are wired to trust familiar voices.

Think about it. For most of human history, hearing your loved one’s voice on the phone was one of the strongest signals of truth. That assumption is breaking down. Scammers exploit this by creating urgency—claiming accidents, kidnappings, or medical emergencies that demand immediate action.

In many cases, they use a script that escalates pressure. They might start with the cloned voice in distress, then have another person take over with threats. The calls often involve multiple hang-ups and callbacks, keeping the victim in a heightened emotional state where rational thinking becomes difficult.

Real Impact on Families and Daily Life

The effects go well beyond the moment of the call. Families report increased anxiety, changes in phone habits, and even strained relationships as people become suspicious of legitimate calls. One person I learned about started double-checking locks and avoiding certain ringtones after her experience.

Some families now use code words to verify identity in emergencies. While this is a smart precaution, it also highlights how trust has been eroded by technology meant to connect us. The psychological manipulation involved makes these scams particularly cruel.

- Immediate panic and emotional distress during the call

- Long-term anxiety about answering the phone

- Changes in family communication protocols

- Potential financial losses if the victim pays

- Difficulty trusting future calls from loved ones

What makes this especially troubling is how scammers target the most vulnerable moments. They choose times when family members might be apart, such as work hours, travel, or late at night, when verification is harder. The industrialized nature of these operations means teams work across time zones, using shared databases of personal information.

Why Traditional Defenses Are No Longer Enough

Caller ID spoofing has been around for years, but combining it with AI voice generation takes the deception to another level. Even people who consider themselves cautious can fall victim because the sensory cues we rely on have been hijacked.

Police departments note that tracing these calls is extremely difficult due to international networks and sophisticated routing. Many operations run from overseas, making legal action challenging. This leaves families feeling helpless even after reporting incidents.

What has evolved in recent years is the level of sophistication.

That sophistication includes not just voice but also context. Scammers might reference recent family events or use names of grandchildren to build credibility. They create a complete story that feels personal and urgent.

Practical Steps to Protect Yourself and Your Family

The first and most important rule many experts now recommend is simple: don’t answer unknown or unexpected calls. Let them go to voicemail. If it’s truly important, the person will leave a message or try another method.

When you do need to verify, hang up and call back using a known number. Don’t use the callback function on your phone, as scammers can manipulate that too. Create family code words for emergencies—something specific that only your loved ones would know.

- Establish a family verification system with secret questions or code phrases

- Limit personal information shared on public social media profiles

- Discuss scam awareness regularly with family members of all ages

- Enable advanced security features on your phone and accounts

- Have backup contact methods that don’t rely solely on phone calls

Being cautious about what you post online matters more than ever. Short voice clips from videos can be used to train cloning software. Consider who can access your family photos and location tags. Small changes in digital habits can significantly reduce your risk profile.

The Broader Picture: Technology and Trust

We’re at an interesting crossroads where tools designed to make life easier are also being weaponized. Voice assistants, deepfake videos, and AI chatbots show tremendous potential for good, yet the same capabilities enable new forms of fraud. Finding the balance between innovation and protection is crucial.

In my view, education plays the biggest role here. The more people understand how these scams work, the less effective they become. Sharing stories like the one from Montana helps others recognize the patterns and respond appropriately instead of reacting from fear.

Organizations are working on detection tools that can flag synthetic voices, but widespread deployment will take time. Until then, personal vigilance remains our best defense. This doesn’t mean living in constant suspicion, but rather approaching unexpected urgent requests with healthy skepticism.

Building Resilience in the Digital Age

Families can take proactive steps beyond just avoiding calls. Regular conversations about online safety, reviewing privacy settings together, and practicing verification scenarios can make everyone more prepared. Think of it as updating your family’s emergency plan for the modern world.

Consider creating a shared document with important contacts, medical information, and verification protocols. Having these ready reduces panic during high-stress situations. Role-playing different scam scenarios as a family exercise can also be surprisingly effective and even build closer bonds through shared preparedness.

Financial institutions and tech companies are developing better authentication methods, but individual awareness remains key. Never send money through unverified channels during emotional calls. A legitimate emergency can usually be confirmed through more than one channel.

The emotional manipulation in these scams is what makes them so damaging. They don’t just steal money—they steal peace of mind and the simple joy of answering a call from someone you love. Reclaiming that sense of security requires both individual action and broader societal efforts.

Recognizing Common Patterns in Voice Scams

Most of these calls follow similar scripts. They create urgency with claims of immediate danger. They discourage verification by saying “don’t tell anyone” or “there’s no time.” They push for quick payment methods that are hard to reverse, like gift cards, wire transfers, or certain apps.

Pay attention to inconsistencies. Real family members in trouble usually provide specific details or respond naturally to questions only they would know. Scammers often get vague or aggressive when pressed for verification.

| Red Flag | Why It Matters |
| --- | --- |
| Unexpected urgent request for money | Legitimate needs have multiple confirmation channels |
| Pressure not to contact others | Isolates victim from reality checks |
| Refusal to answer specific verification questions | Indicates lack of genuine knowledge |
| Multiple hang-ups and callbacks | Maintains emotional pressure |

Understanding these patterns helps you pause and think even when emotions run high. That pause can be the difference between becoming a victim and staying safe.
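For readers who like to keep a checklist, the four red flags in the table can be sketched as a short Python script. This is only an illustration: the class name, the field names, and the rule that a single red flag is enough to hang up and verify are assumptions made here, not an expert-approved threshold.

```python
from dataclasses import dataclass


@dataclass
class CallObservations:
    """What you noticed during a call. Field names are illustrative."""
    urgent_money_request: bool = False
    told_not_to_contact_others: bool = False
    refuses_verification_questions: bool = False
    repeated_hangups_and_callbacks: bool = False


def count_red_flags(call: CallObservations) -> int:
    """Count how many of the four red flags from the table were observed."""
    return sum([
        call.urgent_money_request,
        call.told_not_to_contact_others,
        call.refuses_verification_questions,
        call.repeated_hangups_and_callbacks,
    ])


def suggested_action(call: CallObservations) -> str:
    """Map the flag count to a next step (the cut-off is an assumption)."""
    if count_red_flags(call) == 0:
        return "No red flags noted; stay alert."
    return "Hang up and call back on a number you already know."


if __name__ == "__main__":
    call = CallObservations(
        urgent_money_request=True,
        told_not_to_contact_others=True,
    )
    print(count_red_flags(call), "red flags:", suggested_action(call))
```

The point of the sketch is the pause it forces: naming what you observed, one item at a time, is a way to step out of the panic before deciding what to do.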

Looking Ahead: Future of Communication Security

As AI capabilities continue advancing, we’ll likely see more creative applications, both beneficial and harmful. The challenge for all of us is staying informed without becoming paralyzed by fear. Technology evolves quickly, but human connection and common sense remain powerful tools.

Perhaps the most encouraging aspect is how sharing experiences raises collective awareness. Each story that reaches more people makes the scams slightly less effective. Communities that talk openly about these threats become harder targets.

Ultimately, protecting our families in this new landscape requires a blend of old wisdom and new habits. Trust but verify has never been more relevant. The simple act of reaching out through multiple channels when something feels off can break the scammers’ carefully constructed illusions.

While the technology behind these threats feels intimidating, the solutions often come down to basic human practices—clear communication, established protocols, and looking out for one another. In an increasingly digital world, those fundamentals matter more than ever.

By staying aware and prepared, we can reduce the power these scams hold over us. The goal isn’t to eliminate all risk—that’s impossible—but to make sure we respond thoughtfully rather than fearfully when the phone rings with an unexpected call.

The experience of hearing what sounds like your child in danger is something no parent should go through. Yet by understanding how these operations work and taking practical precautions, we can protect the people we love and preserve the trust that makes family connections so valuable.